Data Engineering Your Team Can Trust

We build the data pipelines and infrastructure that keep your business running smoothly, so your analysts spend time on insights, not fixing broken data feeds.

Data Engineering Services India: Built to Power Your Business

We build the plumbing that makes everything else possible: reliable pipelines, clean data, and infrastructure your data scientists and analysts can actually trust.

ETL & ELT Pipeline Development

Custom data pipelines that extract from any source (databases, APIs, event streams, files), transform reliably, and load into your warehouse or lake with full auditability and error handling.

Data Warehouse Design

Dimensional modelling, schema design, and warehouse setup on Snowflake, BigQuery, AWS Redshift, or Databricks. We design for query performance, cost control, and long-term maintainability.

Real-Time Streaming

When your business can't wait for a nightly batch job, we build streaming pipelines that process data as it arrives, powering live dashboards, fraud detection, IoT monitoring, and real-time personalisation.

Data Lake Architecture

We design and build scalable data lakes on AWS, GCP, or Azure, structured in layers so raw data stays intact, clean data is easy to query, and your team can always trace where a number came from.

Data Quality & Governance

We set up automated checks that catch bad data before it reaches your dashboards or models. Your analysts stop asking "can I trust this number?" and start using data with confidence.
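As a rough illustration of what such a check can look like, here is a minimal sketch in Python. It assumes a batch arrives as a list of dictionaries; the function name `check_rows` and the column names in the usage note are hypothetical, and a real setup would more likely use a dedicated data-testing tool.

```python
def check_rows(rows, required, unique_key):
    """Return a list of human-readable problems; an empty list means the batch passes."""
    problems = []
    seen = set()
    for i, row in enumerate(rows):
        # Completeness: every required column must be present and non-null.
        for col in required:
            if row.get(col) is None:
                problems.append(f"row {i}: missing {col}")
        # Uniqueness: the key column must not repeat within the batch.
        key = row.get(unique_key)
        if key is not None:
            if key in seen:
                problems.append(f"row {i}: duplicate {unique_key}={key}")
            seen.add(key)
    return problems
```

A pipeline could call `check_rows(batch, ["order_id", "amount"], "order_id")` and refuse to publish the batch whenever the returned list is not empty.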

Cloud Data Infrastructure

Infrastructure-as-code setup for your entire data platform on AWS, GCP, or Azure. Covers compute, storage, networking, access controls, cost alerts, and monitoring. Reproducible, version-controlled, and auditable.

Data Engineering for Teams That Need Their Data to Just Work

We have built data infrastructure for e-commerce platforms, transport networks, healthcare systems, financial services, SaaS products, and media companies. Every implementation is shaped around what the business actually needs to run on that data.

E-Commerce

- Real-time inventory and order data pipelines

- Multi-seller data integration and reconciliation

- Customer behaviour event streaming

- Sales and returns reporting infrastructure

Transport and Logistics

- Real-time GPS and route data processing

- Multi-modal transport data integration

- Fleet telematics and event streaming

- On-time performance and delay analytics pipelines

Finance

- Trade and transaction data pipelines

- Regulatory reporting data flows

- Risk data aggregation infrastructure

- Audit-ready data lineage and governance

Healthcare

- HIPAA-compliant data lake architecture

- EHR and clinical data integration pipelines

- Patient event streaming and monitoring feeds

- Cross-system data quality and reconciliation

SaaS and Product

- User activity and event tracking pipelines

- Feature usage and product analytics infrastructure

- Multi-tenant data isolation architecture

- Usage-based billing data flows

Media and Content

- Real-time content consumption event streaming

- Ad impression and click data pipelines

- Audience segmentation data infrastructure

- Content recommendation data feeds

TranziGo: Mobility as a Service Platform

TranziGo needed real-time data from over 40 transport providers including buses, trains, bikes, scooters, and ride shares, all unified into a single experience. We built the data infrastructure that makes that possible: live GPS feeds, multi-modal event streams, and provider integrations that give commuters accurate, up-to-the-second information across every transport type in the app.

Need a reliable data pipeline?

Tell us about your data sources and what you need to do with them. We will come back within one business day with an honest scope, timeline, and cost estimate.

The Data Engineering Stack We Trust

Industry-standard tools selected for reliability, performance, and the ability for your team to maintain and extend the work after we hand it over.

From Architecture Design to Monitored Production

We follow a disciplined process that minimises risk at each step, designing for the data volumes you'll have in two years, not just today.

Architecture Design

Map data sources, volumes, and latency requirements. Design the right architecture (lambda, kappa, or medallion) before writing a single line of code.

Data Ingestion

Connect all sources (databases, event streams, SaaS APIs, files) with idempotent, resumable ingestion that handles failures gracefully.
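To make "idempotent, resumable" concrete, here is a minimal sketch in Python. It assumes records carry a unique `id` and that the source can be read by offset; the `Sink` class and `ingest` function are hypothetical stand-ins for a real warehouse table and loader.

```python
from dataclasses import dataclass, field


@dataclass
class Sink:
    """Hypothetical in-memory stand-in for a warehouse table keyed by record id."""
    rows: dict = field(default_factory=dict)
    checkpoint: int = 0  # number of source records that have been fully loaded

    def upsert(self, record: dict) -> None:
        # Writing by primary key means a replayed record overwrites itself
        # instead of creating a duplicate (idempotent).
        self.rows[record["id"]] = record


def ingest(source: list, sink: Sink, batch_size: int = 2) -> None:
    """Load records in batches, resuming from the last committed offset."""
    offset = sink.checkpoint
    while offset < len(source):
        batch = source[offset:offset + batch_size]
        for record in batch:
            sink.upsert(record)
        offset += len(batch)
        sink.checkpoint = offset  # commit progress only after the batch lands
```

If a run stops part-way, calling `ingest` again picks up from `sink.checkpoint`, and any record that is loaded twice simply overwrites its earlier copy.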

Transformation & Modelling

Build dbt models, Spark jobs, or streaming transformations that produce clean, consistent, documented data products your analysts can rely on.

Orchestration

Schedule, monitor, and alert on pipeline execution. With dependency management, SLA tracking, and automatic retry logic in place, your pipelines largely run themselves.
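Automatic retry logic is usually provided by the orchestrator itself, but the idea can be sketched in a few lines of Python. This is an illustration under assumptions: `run_with_retry` and its parameters are hypothetical names, and the backoff schedule (doubling the delay after each failure) is one common choice rather than a fixed rule.

```python
import time


def run_with_retry(task, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Run task(); after a failure wait base_delay * 2**(attempt - 1), then retry."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error so alerting can fire
            sleep(base_delay * 2 ** (attempt - 1))
```

The `sleep` parameter exists so the wait can be replaced in tests; in normal use the default `time.sleep` applies.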

Monitoring & Governance

Data quality checks, lineage documentation, cost alerts, and performance dashboards. You find out when something breaks before your users do.

Data Engineering FAQ

Frequently asked questions from data teams, CTOs, and product leaders considering a data engineering engagement.

Managed services (Fivetran, Airbyte, Stitch) work well for standard SaaS-to-warehouse pipelines. You need custom data engineering when you have complex business logic in transformations, non-standard sources, real-time requirements, high data volumes that make managed services expensive, or compliance constraints. We can help you make this call objectively before you commit to either path.

We design data pipelines with encryption at rest and in transit, column-level masking for PII, role-based access control, and full audit logging from day one. For GDPR, HIPAA, or PCI-DSS requirements, we document data flows and implement the required controls as part of the architecture, not as an afterthought.
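Column-level masking can take several forms. One option, sketched below in Python, replaces each PII value with a keyed hash so that records can still be joined on the masked value without exposing the original. The function name `mask_columns`, the column names, and the choice of HMAC-SHA256 are assumptions for illustration; many warehouses offer built-in masking policies that would be used instead.

```python
import hashlib
import hmac


def mask_columns(row, pii_columns, secret):
    """Return a copy of row with the listed columns replaced by HMAC-SHA256 digests."""
    masked = dict(row)  # copy so the original record is left untouched
    for col in pii_columns:
        if masked.get(col) is not None:
            digest = hmac.new(secret, str(masked[col]).encode("utf-8"), hashlib.sha256)
            masked[col] = digest.hexdigest()
    return masked
```

For example, `mask_columns({"user_id": 7, "email": "a@example.com"}, ["email"], secret_key)` leaves `user_id` as is and returns a 64-character hex string in place of the email address, where `secret_key` is a bytes value kept outside the pipeline code.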

Yes. We frequently migrate legacy SQL Server SSIS packages, custom Python scripts, or monolithic ETL jobs to modern, observable, orchestrated pipelines. We use a strangler-fig approach: new pipelines run in parallel with the old ones so we can validate their output before cutting over. This keeps migrations low-risk and avoids disrupting your business data.
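The parallel-run validation step can be pictured as a diff between the two outputs. The Python sketch below is illustrative only: `compare_outputs` is a hypothetical helper, and it assumes both pipelines produce rows that share a unique key column.

```python
def compare_outputs(legacy_rows, new_rows, key):
    """Diff two pipeline outputs keyed by `key` before cutting over."""
    legacy = {r[key]: r for r in legacy_rows}
    new = {r[key]: r for r in new_rows}
    shared = legacy.keys() & new.keys()
    return {
        "missing_in_new": sorted(legacy.keys() - new.keys()),
        "extra_in_new": sorted(new.keys() - legacy.keys()),
        "mismatched": sorted(k for k in shared if legacy[k] != new[k]),
    }
```

A cutover would only be considered once all three lists come back empty over an agreed validation window.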

Every pipeline we build includes automated data quality tests, anomaly detection on key metrics, SLA alerting, and runbooks for common failure modes. We instrument everything with logs and metrics, set up PagerDuty or Slack alerts for on-call, and write documentation that allows your team to diagnose and fix issues without needing us on a call.

Cloud data costs can spiral quickly with poor architecture. We design for cost efficiency from the start by right-sizing compute, using columnar storage formats, partitioning tables correctly, setting up query cost budgets, and implementing data retention policies. We also review and optimise existing infrastructure for clients whose bills have grown unexpectedly.

Ready to Build Your Data Platform?

Tell us about your project and we'll come back within one business day with a plan, a timeline, and an honest scope estimate. No pressure, no fluff.

Ideas, Guides, and

Industry Perspectives

How Much Does AI Development Cost in India? (2026 Guide)

A complete breakdown of AI development costs in India in 2026. Chatbots, ML models, LLM integration, and full AI products.…

Read Article

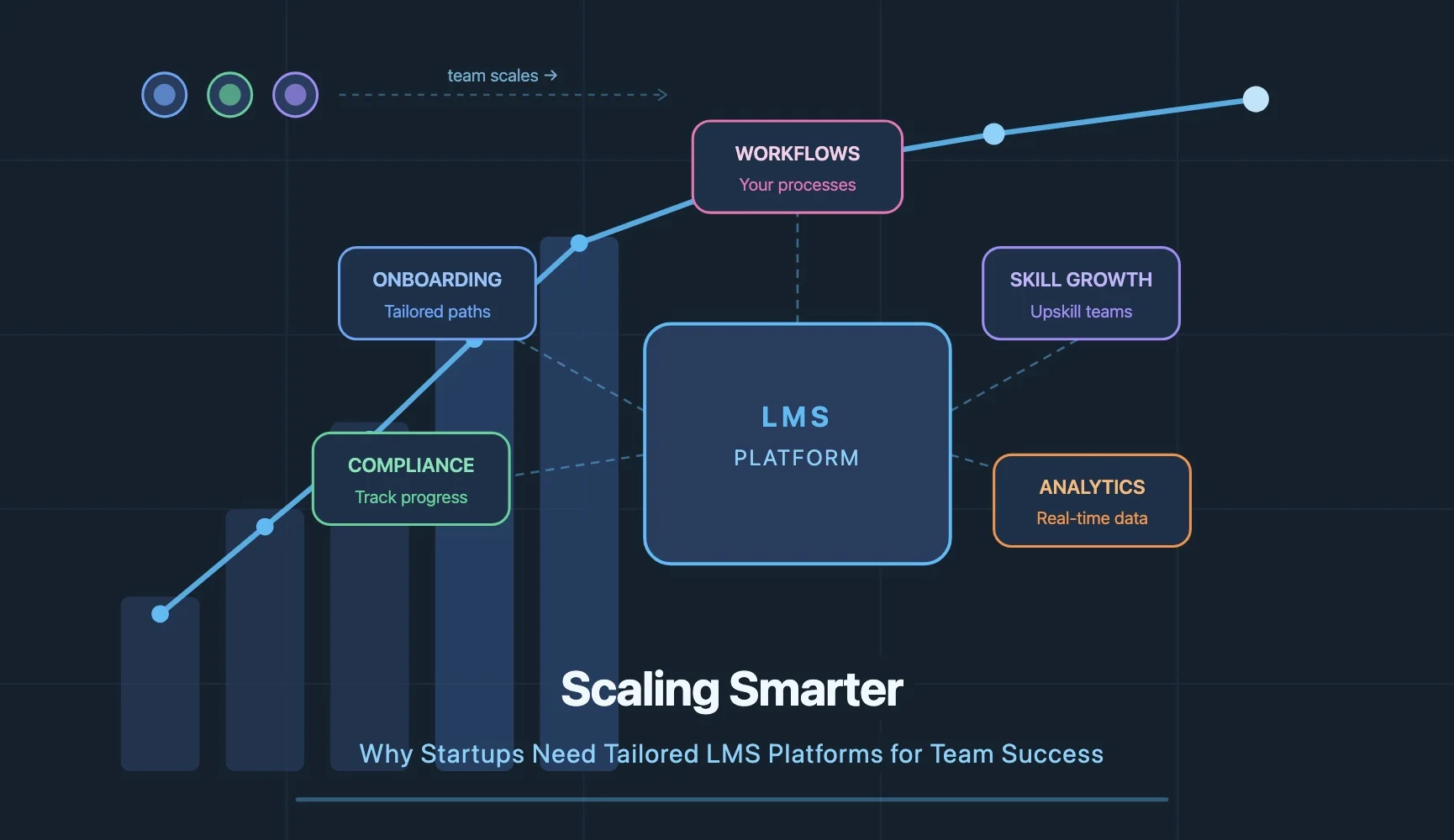

Scaling Smarter: Why Startups Need Tailored LMS Platforms for Team Success

Most startups do not have a training problem. They have a scaling problem. A tailored LMS built around your actual…

Read Article

How AI Development Can Transform Your Business in 2026

AI development is transforming how businesses operate in 2026. Five areas creating real value: process automation, smarter decisions, personalisation at…

Read ArticleLet's Talk About

Your Project

Have a question or ready to start? Drop us a message and we'll get back to you within one business day.

A118, Sector 63

Noida, UP 201301

304 Krishna Classic, A.B Road

Indore, MP 452008